Bias Compounds, Variance Washes Out

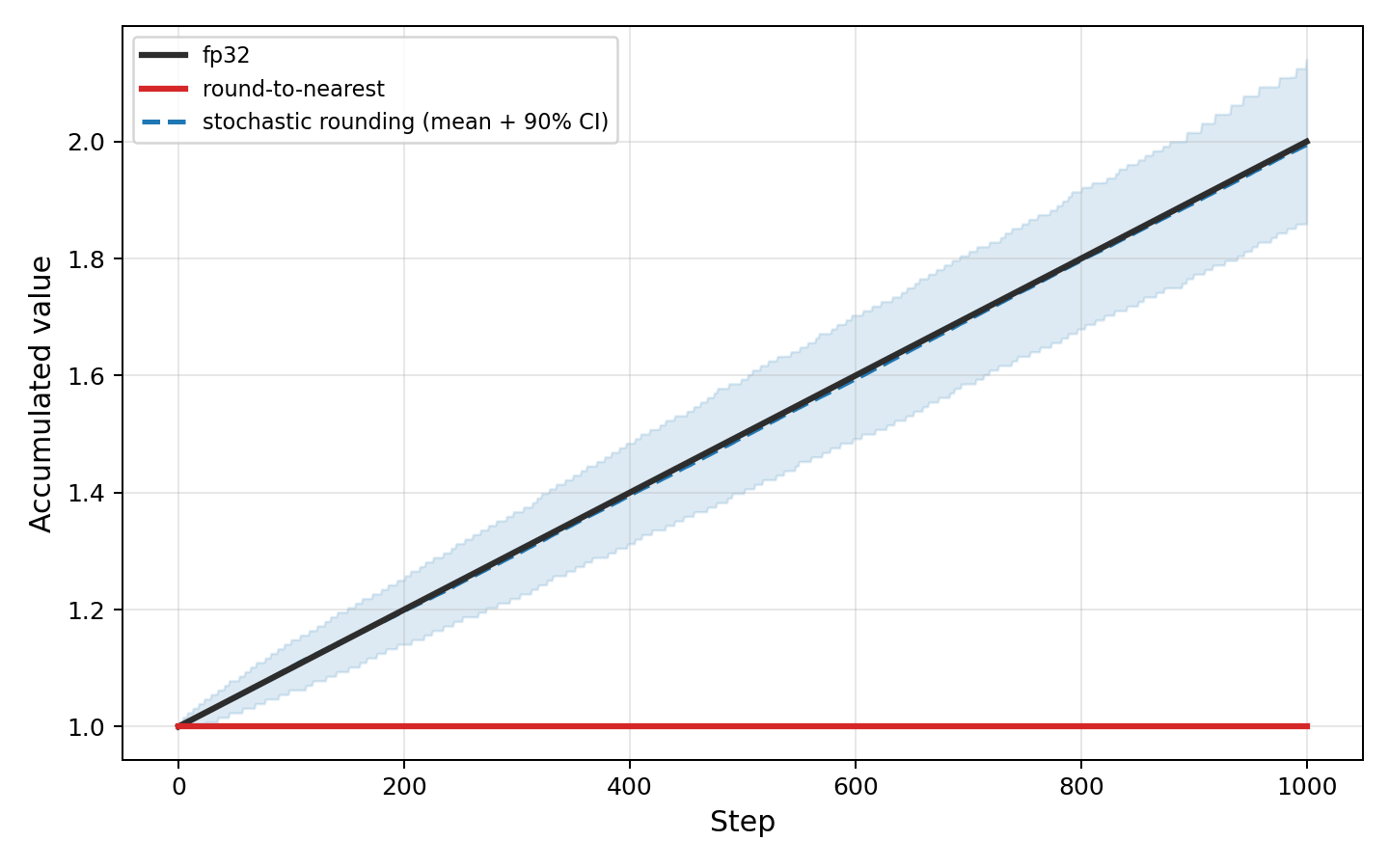

Round-to-nearest makes the same rounding error every time. Stochastic rounding makes a different error each time, centered on zero. When the same error repeats, it compounds. When errors are zero-mean, they partly cancel.

Add 0.001 to 1.0 a thousand times in BF16 and round-to-nearest never moves. BF16 keeps 8 significant bits, so the next representable value above 1.0 is 1.0078125. Every update falls closer to 1.0 than to that value, so every update rounds back to 1.0. Stochastic rounding lands close to 2.0. Each update rounds up with probability equal to its fractional position between the two neighboring representable values. In expectation, the sum is exact.

Over $n$ steps, biased errors grow as $O(n)$, but unbiased errors grow as $O(\sqrt{n})$.
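A short sketch of where the two rates come from, assuming step $i$ contributes a rounding error $e_i$. The symbols $e_i$, $b$, and $\sigma$ are introduced here for illustration only: $b$ is a fixed per-step bias, and $\sigma^2$ bounds the per-step variance of errors that are zero-mean and uncorrelated.

$$
\text{biased: } e_i = b \;\Rightarrow\; \Big|\sum_{i=1}^{n} e_i\Big| = n\,|b| = O(n)
$$

$$
\text{unbiased: } \mathbb{E}[e_i] = 0,\ \operatorname{Var}(e_i) \le \sigma^2 \;\Rightarrow\; \sqrt{\mathbb{E}\Big[\Big(\sum_{i=1}^{n} e_i\Big)^{2}\Big]} \le \sigma\sqrt{n} = O(\sqrt{n})
$$

The cross terms $\mathbb{E}[e_i e_j]$ vanish for $i \ne j$ because the errors are uncorrelated, which is why the variances add instead of the errors themselves.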

The noise from stochastic rounding diffuses like a random walk: its variance grows with every step, so its typical size grows only as $\sqrt{n}$. That is slower than bias, and over long runs of small updates, that difference is everything.
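The two growth rates can be checked numerically. This is a rough sketch under a simplified model: values are rounded to a fixed grid with spacing $2^{-7}$ (the BF16 spacing between 1.0 and 2.0) rather than to true BF16. The function names, step counts, and trial count are arbitrary choices for illustration.

```python
import math
import random

H = 2.0 ** -7  # grid spacing; matches the BF16 spacing between 1.0 and 2.0

def round_nearest(x):
    return round(x / H) * H

def round_stochastic(x, rng):
    lo = math.floor(x / H)
    frac = x / H - lo                        # position inside the rounding interval
    return (lo + (rng.random() < frac)) * H  # round up with probability frac

def final_error(n, rounder, delta=0.001):
    """Error of the rounded running sum after n additions of delta to 1.0."""
    acc = 1.0
    for _ in range(n):
        acc = rounder(acc + delta)
    return acc - (1.0 + n * delta)

def rms_sr_error(n, trials=500):
    """Root-mean-square error of stochastic rounding over independent runs."""
    rng = random.Random(n)  # fixed seed per n so the run is repeatable
    squares = [final_error(n, lambda x: round_stochastic(x, rng)) ** 2
               for _ in range(trials)]
    return math.sqrt(sum(squares) / trials)

# Quadruple the number of steps and compare how each error grows.
bias_ratio = abs(final_error(400, round_nearest)) / abs(final_error(100, round_nearest))
noise_ratio = rms_sr_error(400) / rms_sr_error(100)
print("biased error grows by a factor of  ", bias_ratio)
print("unbiased error grows by a factor of", noise_ratio)
```

With four times as many steps, the round-to-nearest error grows by a factor of 4, while the stochastic-rounding error should grow by a factor near 2, up to sampling noise.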

The Experiment

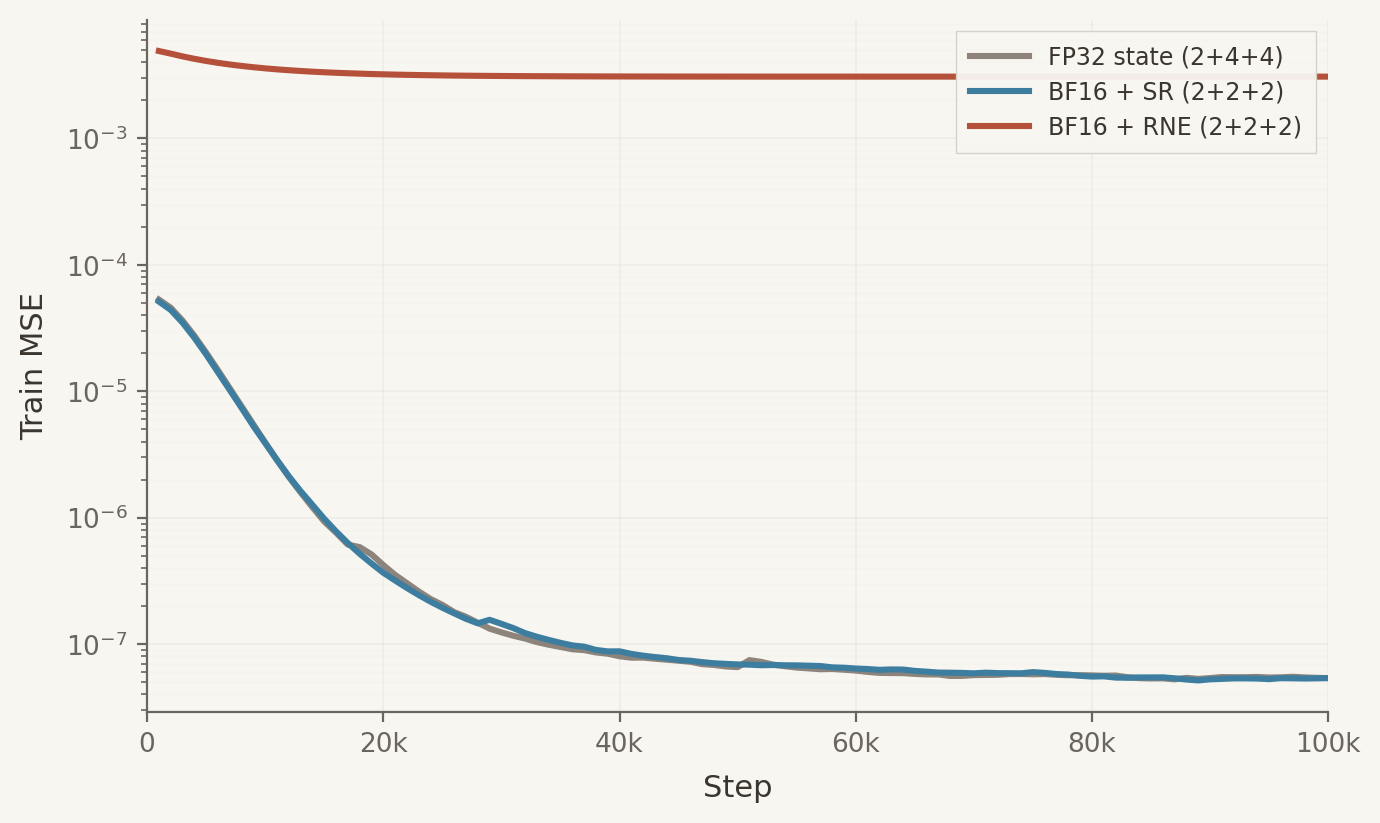

A small MLP is trained on a teacher-student regression task using HeavyBall’s AdamW. All configs store parameters in BF16. The experiment varies the precision and rounding mode of the optimizer state.

BF16 + SR (6 bytes per parameter: parameters, first moment, and second moment at 2 bytes each) matches the loss reached with FP32 state (10 bytes: 2 for the BF16 parameters plus 4 for each moment). The per-step updates are noisier, but the noise is unbiased and washes out over training. BF16 + RNE (also 6 bytes) plateaus at a loss orders of magnitude higher. The same errors repeat, and the loss stalls.

Stochastic rounding replaces round-to-nearest inside the optimizer kernel, adding no memory and no bandwidth.
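To illustrate why the swap costs no extra storage, here is a toy sketch of a second-moment style moving average whose stored value is always BF16. It is not HeavyBall's kernel: `to_bf16` and `ema_state` are hypothetical helpers, BF16 is emulated as the top 16 bits of float32, and the constant squared gradient of 1.0 and starting value of 0.5 are arbitrary. The only difference between the two modes is how the final write-back is rounded. The random bits are used once and discarded, so nothing extra is stored.

```python
import random
import struct

def to_bf16(x, rng=None):
    """Round x to BF16: round-to-nearest-even if rng is None, else stochastic."""
    bits = struct.unpack("<I", struct.pack("<f", x))[0]
    if rng is None:
        bits += 0x7FFF + ((bits >> 16) & 1)  # nearest, ties to even
    else:
        bits += rng.getrandbits(16)          # random carry into the kept bits
    return struct.unpack("<f", struct.pack("<I", bits & 0xFFFF0000))[0]

def ema_state(steps, rng=None, beta2=0.999, grad_sq=1.0, v0=0.5):
    """Exponential moving average of grad_sq, stored in BF16 after every step."""
    v = to_bf16(v0)
    for _ in range(steps):
        # The update is computed in higher precision; only the write-back differs.
        v = to_bf16(beta2 * v + (1.0 - beta2) * grad_sq, rng)
    return v

v_rne = ema_state(5000)                       # round-to-nearest write-back
v_sr = ema_state(5000, rng=random.Random(0))  # stochastic write-back
print("RNE state:", v_rne)
print("SR state: ", v_sr)
```

In this toy setting the round-to-nearest state never leaves 0.5, because each increment is smaller than half the spacing between neighboring BF16 values. The stochastic state drifts toward the true average of 1.0.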

Remove the bias and six bytes match ten. Leave it, and six bytes hit a wall.

Corrected 2026-03-16. Results rerun after fixing a torch.compile fusion that eliminated bf16 round-trips in the original experiment.